After Google I/O and WWDC, what's next for my apps?

A breakdown of what will impact your apps

The two major developer conferences are now behind us and you are probably wondering, "What's next for my apps and why should I care?" We're rounding up everything we've discovered and will incorporate into our next SDK release.

It's always an exciting time when, between May and June, the two major mobile platforms each pack rooms with developers for a week and show off all the new goodies coming to their platforms. Here's a breakdown of what will impact your apps when using our SDK.

Google I/O

Android O is coming! It's probably one of the biggest Android releases so far and it brings a complete overhaul of notifications. This means that we will change how things work in our SDK to accommodate these changes.

Background App Usage

Android O is going to be even more battery-friendly, which means your apps will not be allowed unlimited time in the background. We are making sure that our SDK will still work as it does now, without violating the constraints Android O puts on background processing.

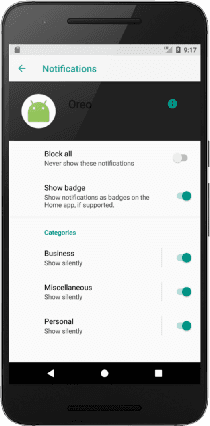

Notification Channels

Android O introduces channels to help users manage notifications. When targeting Android O, developers must create a channel for each type of notification their apps send. This allows users to manage the settings for each type of notification in a unified and consistent new UI, where things like importance, sound, lights, vibration, lock screen and do not disturb can be adjusted individually for each type. As always, we will give you ways of abstracting the implementation of these channels without disrupting your current setup too much.

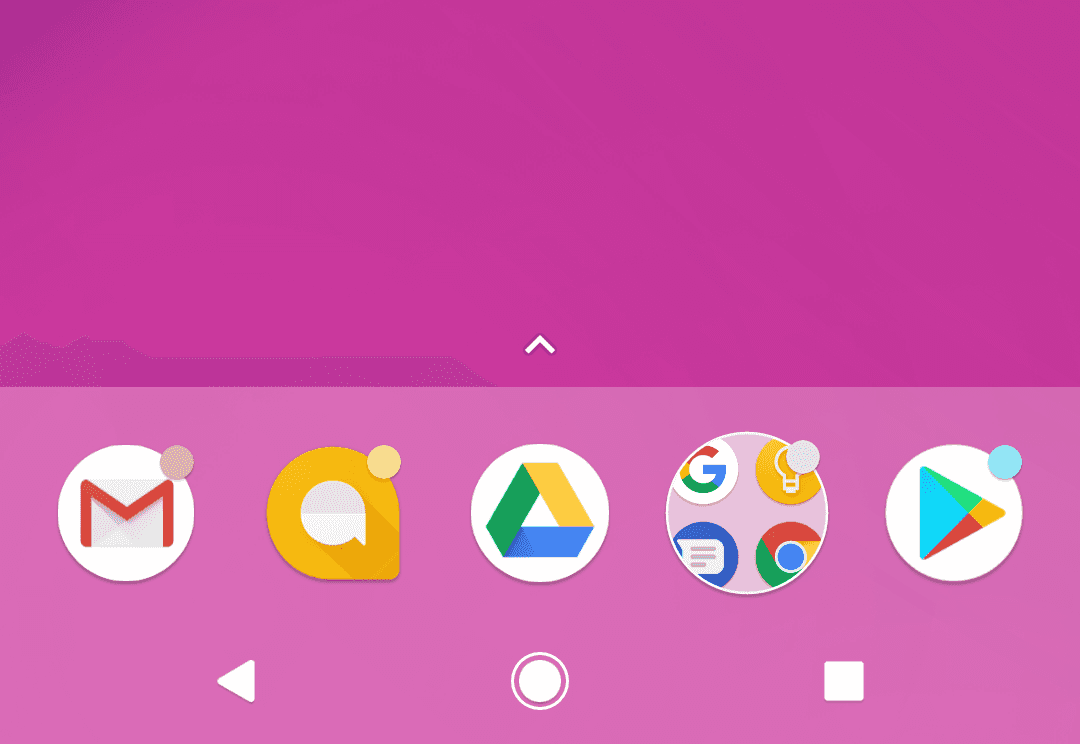

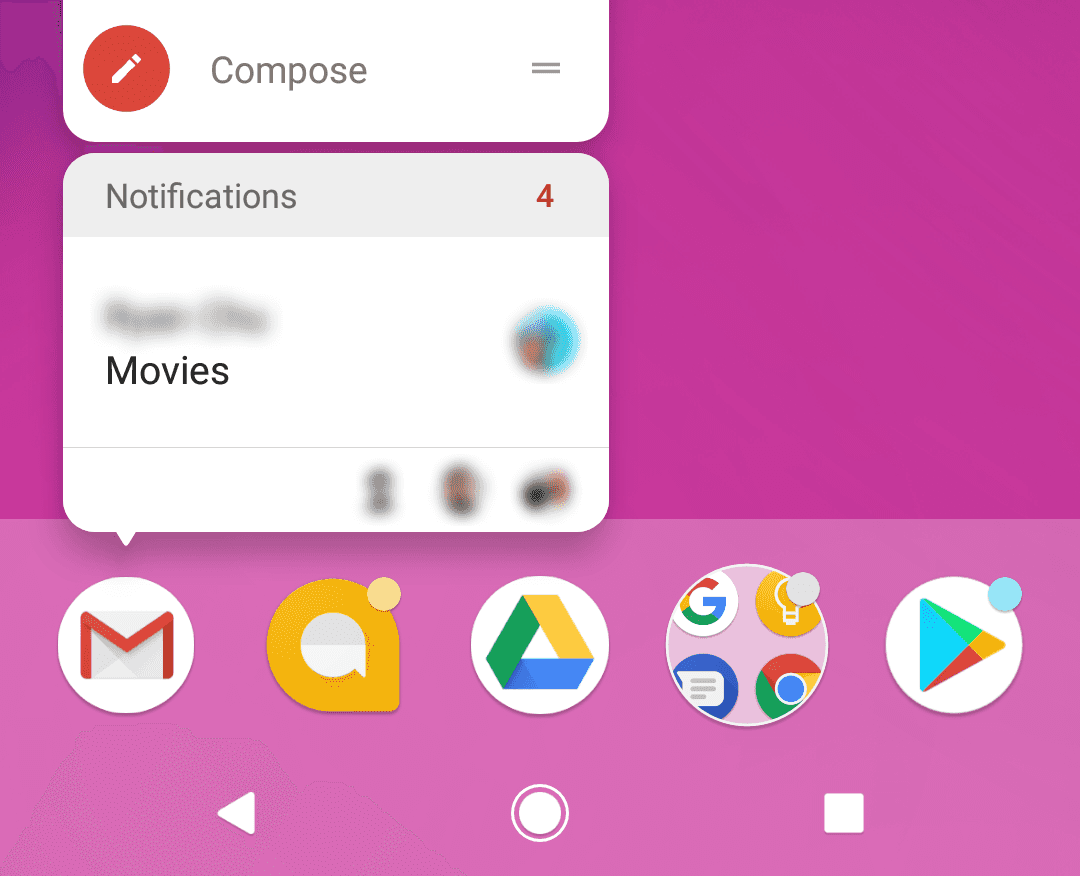
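To make the channel idea more concrete, here is a minimal, platform-neutral sketch in Python. It is not the Android API and not our SDK's API: `Channel`, `ChannelRegistry`, the field names and the 0 to 5 importance scale are all hypothetical and only model the concept of one channel per notification type, with settings the user can change per channel.

```python
from dataclasses import dataclass, replace
from typing import Dict

# Hypothetical model of notification channels. None of these names
# come from Android or from our SDK; they only illustrate the concept.


@dataclass(frozen=True)
class Channel:
    channel_id: str
    name: str
    importance: int = 3   # assumed scale: 0 (none) to 5 (max)
    sound: bool = True
    lights: bool = True
    vibration: bool = True


class ChannelRegistry:
    """Keeps one channel per notification type."""

    def __init__(self) -> None:
        self._channels: Dict[str, Channel] = {}

    def create(self, channel: Channel) -> None:
        # In this model, re-creating an existing channel is a no-op,
        # so settings changed by the user are never overwritten.
        self._channels.setdefault(channel.channel_id, channel)

    def user_update(self, channel_id: str, **settings) -> None:
        # Models the user adjusting a single channel in the settings UI.
        current = self._channels[channel_id]
        self._channels[channel_id] = replace(current, **settings)

    def channel_for(self, channel_id: str) -> Channel:
        # In this model, every notification must name a registered channel.
        if channel_id not in self._channels:
            raise KeyError(f"no channel registered for {channel_id!r}")
        return self._channels[channel_id]
```

In this model, an app would register something like a "promotions" channel and an "orders" channel once at startup. If the user later silences promotions, only that channel changes and order updates keep their sound.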

Notification Badges

One of the coolest features in this release of Android is the introduction of an app badge (similar to iOS). Also known as notification dots, they will appear on the top-right corner of the app icon and will allow users to quickly see that there are notifications that have not yet been dismissed or acted upon.

This interaction between users and your app icon brings a whole new way of consuming content sent over push notifications. By long-pressing the app icon, users can see unread messages at a glance, pretty much the same way they do in the notification center.

From Alt Beacon to Nearby API

One of the things we are changing soon is how your app uses beacon capabilities: it will no longer need an external library, as was the case with the Alt Beacon library. By switching to the Nearby API, you will continue to enjoy iBeacon capabilities in your apps straight from Google APIs, making it even easier for developers to tap into this functionality.

AI-first approach

It was a pretty consistent trend at this year's Google I/O: Google wants to be your assistant that sees and understands the world around you. Android now runs on more than 2 billion devices across a multitude of smartphones, tablets, wearables, TVs, cars and even in your home. It's clear that Google's powerhouse of data and knowledge will enable Android devices to do smarter things. Artificial intelligence has become so important for Google that it is introducing a second generation of its tensor processing unit (TPU) chip. This will enable faster training and running of AI models on Google Cloud.

WWDC

Oh yeah, the Cupertino company also announced new versions of its major platforms. iOS, tvOS, watchOS and macOS are being updated this fall with a lot of new stuff. Besides the new iPad Pro and new iMacs, Apple also introduced the HomePod, entering the race for your living room and directly competing with Google's Home and Amazon's Alexa. Although no changes to notifications were introduced in the upcoming release (something our team is always on the lookout for), there are a couple of things you will have to take into consideration when releasing your apps.

Location Privacy in Apps

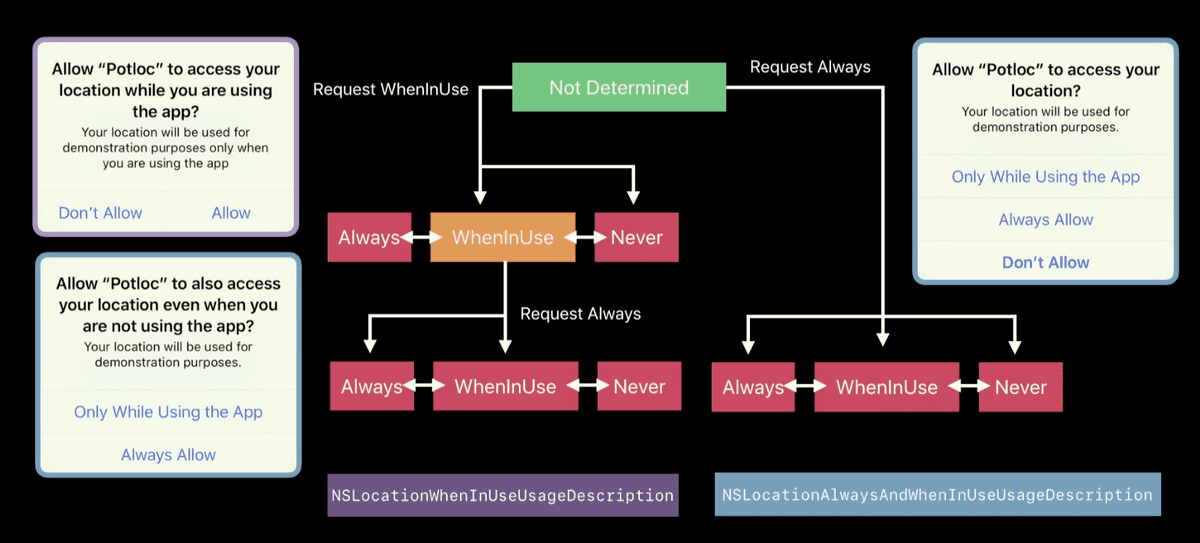

Apple's iOS 11 is giving more control to users over the way apps collect location information. If your app tracks location, it will be affected by the introduction of a new option in the permission dialog which will enable location only when the app is in use.

For many use cases, limiting location access to when the app is in use makes sense. For most of you, however, it will affect how your app gathers location in the background, making it impossible to track significant location changes and, consequently, to send notifications whenever users visit your stores and venues. It's more important than ever that your onboarding explains these features clearly and demonstrates the benefits of always sharing location with you.

Core Location improvements

Besides giving more control to users, Apple is also introducing some powerful new location-based features that we will make use of in our SDK. One of the most important is the new Indoor Location capability. This will translate into a better understanding of the user's position inside a building, allowing us to reliably collect positioning data like floor transitions and aisle navigation.

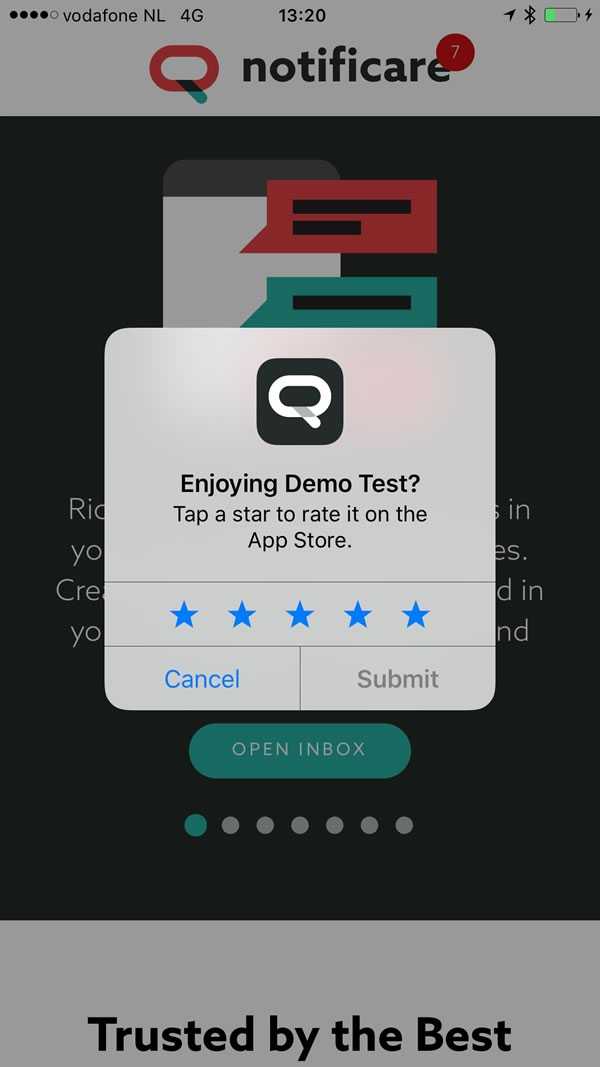

Better Rate API

In iOS 10.3, Apple introduced a new Rate API, allowing your app to ask users to leave a 5-star rating from within your app. Our current SDK already uses this new view whenever you select the Rate App notification type. Now, in iOS 11, Apple is adding some constraints on how often you can ask for these ratings. According to Apple, apps are only allowed to present this view 3 times a year, and once a user actually rates your app, you will only be able to present the view again after a year. Moreover, Apple is putting all the control on the user's side: users can disable this feature altogether from the device settings.
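The limits described above can be sketched as a small check. This is a platform-neutral Python illustration, not Apple's API: `may_prompt`, `past_prompts` and `last_rated` are hypothetical names, and treating "a year" as 365 days is an assumption. It also assumes the app keeps its own record of when it asked and when the user rated, which an app may not always be able to observe.

```python
from datetime import datetime, timedelta
from typing import List, Optional

YEAR = timedelta(days=365)      # assumption: "a year" means 365 days
MAX_PROMPTS_PER_YEAR = 3        # limit quoted in the text above


def may_prompt(now: datetime,
               past_prompts: List[datetime],
               last_rated: Optional[datetime] = None) -> bool:
    """Return True if asking for a rating again fits the limits above."""
    # Once the user has rated, wait a full year before asking again.
    if last_rated is not None and now - last_rated < YEAR:
        return False
    # Only prompts shown within the last 365 days count towards the limit.
    recent = [p for p in past_prompts if timedelta(0) <= now - p < YEAR]
    return len(recent) < MAX_PROMPTS_PER_YEAR
```

A check like this only helps you decide when asking is worthwhile. Whether the rating view is actually shown remains up to the system and the user's settings.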

Core NFC

This is something we have been waiting for since Apple Pay was introduced, and it's finally making its way to your apps. Apple will now let apps read NFC tags, which will translate into powerful new functionality inside our own SDK. We could already do this on Android for some time, but it never made its way into our SDKs due to the lack of support from Apple. In our future releases, your users will be able to automatically unlock content whenever they come in contact with these tags in your stores or venues. You will then be able to use our dashboard to set up these new interactions, allowing for better product promotions, distribution of digital cards, etc.

More on device processing

In a single iOS release, Apple is packing great new APIs for smarter on-device data processing, allowing developers to do more with the powerful hardware users have in their pockets. This will also translate into better and faster on-device data processing in Notificare's SDKs. Using this new range of APIs just makes sense for us, and we will progressively tap into Core ML, Vision and NLP to provide smarter contextual functionality.

Getting Ready

We are currently preparing our next release, 1.10, which will pack all this functionality into our native libraries as well as our framework plugins. Subscribe to our newsletter to get notified whenever these new SDKs are released.